
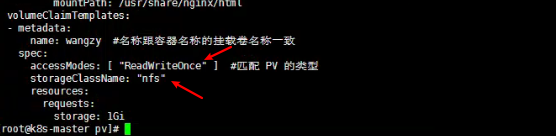

Kubernetes – from cluster reset to up and running

There was an obvious flaw in the example MySQL Chart I deployed via Helm and Tiller: the required Persistent Volume Claims could not be satisfied, so the pod was stuck in a “Pending” state forever.

In this post I will sort that out by adding Persistent Storage to the Cluster, then redeploying and testing the same Chart, installed via “helm install stable/mysql”. This time, it should be able to claim all of the resources it needs with no tweaking or hints supplied…

First, a few notes on some of the commands and tools I used for troubleshooting what was wrong with the mysql deploy.

watch -d 'sudo kubectl get pods --all-namespaces -o wide'

watch -d kubectl describe pod wise-mule-mysql

kubectl attach wise-mule-mysql-d69788f48-zq5gz -i

The above commands showed a pod that generally wasn’t happy or connectable, but gave little detail.

Running “kubectl get events -w” is much more informative:

| LAST SEEN | TYPE | REASON | KIND | MESSAGE |
| --- | --- | --- | --- | --- |
| 17m | Warning | FailedScheduling | Pod | pod has unbound immediate PersistentVolumeClaims |
| 17m | Normal | SuccessfulCreate | ReplicaSet | Created pod: quaffing-turkey-mysql-65969c88fd-znwl9 |
| 2m38s | Normal | FailedBinding | PersistentVolumeClaim | no persistent volumes available for this claim and no storage class is set |
| 17m | Normal | ScalingReplicaSet | Deployment | Scaled up replica set quaffing-turkey-mysql-65969c88fd to 1 |

And doing “kubectl describe pod” is also very useful:

Warning FailedScheduling 5m26s (x2 over 5m26s) default-scheduler pod has unbound immediate PersistentVolumeClaims

That makes it pretty clear what’s going on, and exactly what is noticeably absent from the Cluster.
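As a rough sketch of what “adding Persistent Storage” could look like for the unbound claim described above, here is a minimal, statically provisioned PersistentVolume. It is an illustration under assumptions, not necessarily the setup used later: the name `mysql-pv-example` and the path `/mnt/data/mysql` are hypothetical, the 8Gi size and ReadWriteOnce access mode are assumed to match what the chart's claim requests (check with “kubectl get pvc”), and `hostPath` volumes are only sensible on a single-node test cluster.

```yaml
# Minimal sketch of a manually created PersistentVolume.
# No storageClassName is set, so a claim that also has no storage class
# can bind to it, provided capacity and access mode are compatible.
apiVersion: v1
kind: PersistentVolume
metadata:
  name: mysql-pv-example          # hypothetical name
spec:
  capacity:
    storage: 8Gi                  # assumed; must be >= the size the claim requests
  accessModes:
    - ReadWriteOnce               # assumed; must include the mode the claim requests
  persistentVolumeReclaimPolicy: Retain
  hostPath:
    path: /mnt/data/mysql         # hypothetical directory on the node
```

Saved to a file, this can be applied with “kubectl apply -f” followed by the file name; “kubectl get pv,pvc” should then show whether the pending claim has moved to “Bound”.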
